
Semantic Response Caching for On-Premises LLM APIs: Cutting Cost Without Sending Data Offsite

How embedding-based similarity caching works on private infrastructure, when it is worth the complexity, and how to handle invalidation and privacy.

Beyond exact string matching

Traditional caches key responses by the exact prompt text or a hash of it. That helps for repeated API calls from scripts and retries, but end users rarely phrase questions identically. Semantic caching instead keys stored responses by embedding similarity: if a new question is close enough in vector space to a prior one, the system can return the stored answer and skip generation entirely.
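To make the contrast with exact matching concrete, here is a minimal in-memory sketch. It assumes embeddings have already been computed (the toy three-dimensional vectors stand in for a locally hosted embedding model), uses cosine similarity, and scans linearly rather than querying a vector index. `SemanticCache` and the 0.95 threshold are illustrative only.

```python
import math
from typing import Optional


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


class SemanticCache:
    """Toy cache keyed by embedding similarity instead of exact prompt text."""

    def __init__(self, threshold: float) -> None:
        self.threshold = threshold
        self.entries: list[tuple[list[float], str]] = []

    def put(self, embedding: list[float], response: str) -> None:
        self.entries.append((embedding, response))

    def get(self, embedding: list[float]) -> Optional[str]:
        # Find the most similar stored prompt; a real deployment would ask an
        # approximate nearest-neighbour index instead of scanning every entry.
        best_score, best_response = -1.0, None
        for stored, response in self.entries:
            score = cosine_similarity(embedding, stored)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None


cache = SemanticCache(threshold=0.95)
cache.put([0.9, 0.1, 0.0], "Use the self-service portal to reset your VPN password.")
print(cache.get([0.85, 0.15, 0.05]))  # paraphrase, similarity ~0.996: stored answer
print(cache.get([0.0, 0.2, 0.95]))    # unrelated, similarity ~0.023: None
```

An exact-match cache would miss on the paraphrase; the semantic cache serves it because the vectors point in nearly the same direction.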

On-premises deployments care about this pattern for two reasons. First, GPU time is a finite internal budget; avoiding redundant inference directly preserves capacity for tasks that genuinely need the model. Second, keeping embeddings and cache stores inside your perimeter aligns with data residency expectations, provided you design the cache so it does not become an ungoverned copy of sensitive answers.

Architecture sketch

A typical flow computes an embedding for the incoming prompt using an embedding model you host locally. The vector is compared against entries in a vector index backed by infrastructure you operate, such as pgvector inside PostgreSQL, Milvus, Qdrant, or another self-managed store. Each index entry points to a cached response payload and metadata: model version, adapter ID, temperature, and timestamps.

If similarity exceeds a configured threshold and auxiliary checks pass, the gateway returns the cached body. If not, the request proceeds to inference, and the system optionally inserts a new cache row after the response is produced. The embedding model does not need to match the generative model, but its choice affects what counts as “similar,” so changes to embedding models require the same caution as changes to retrieval pipelines.
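The flow above can be sketched as a single gateway function. This is an illustration under stated assumptions: `embed` and `generate` are hypothetical callables wrapping your local embedding and generative models, the Python list stands in for a self-managed vector index such as pgvector or Qdrant, and the only auxiliary check here is that the stored entry's model version, adapter ID, and temperature match the live request.

```python
import math
import time
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class ServingConfig:
    model_version: str
    adapter_id: str
    temperature: float


@dataclass
class CacheEntry:
    embedding: list[float]
    response: str
    config: ServingConfig  # metadata recorded when the answer was generated
    created_at: float = field(default_factory=time.time)


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms if norms else 0.0


def handle_request(
    prompt: str,
    config: ServingConfig,
    embed: Callable[[str], list[float]],
    generate: Callable[[str], str],
    cache: list[CacheEntry],
    threshold: float = 0.95,
) -> tuple[str, bool]:
    """Return (response, served_from_cache)."""
    query = embed(prompt)
    best_entry: Optional[CacheEntry] = None
    best_score = -1.0
    for entry in cache:
        # Auxiliary check: only entries produced under the identical serving
        # configuration are eligible, however similar the prompt looks.
        if entry.config != config:
            continue
        score = cosine_similarity(query, entry.embedding)
        if score > best_score:
            best_entry, best_score = entry, score
    if best_entry is not None and best_score >= threshold:
        return best_entry.response, True
    # Miss: run inference, then insert a new cache row with its metadata.
    response = generate(prompt)
    cache.append(CacheEntry(embedding=query, response=response, config=config))
    return response, False
```

Keeping insertion on the miss path means the cache only ever holds answers the live model actually produced under a recorded configuration.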

Thresholds, false positives, and safety

Similarity is not semantic equivalence. A slightly different question can have different compliance implications, especially in regulated workflows. Tune similarity thresholds conservatively (that is, demand closer matches) for high-stakes use cases, and consider requiring higher similarity still when tools or structured outputs are involved. For customer-facing chat, pair semantic hits with lightweight validators: for example, ensure cached answers still pass the policy filters written for fresh generations.
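One way to encode tiered thresholds plus a validator is sketched below. The risk tiers, threshold values, and margin are hypothetical placeholders you would tune on your own traffic, and `policy_check` stands in for whatever filter you already run on fresh generations.

```python
from typing import Callable

# Hypothetical minimum cosine similarity per risk tier.
BASE_THRESHOLDS = {"internal": 0.92, "customer": 0.95, "regulated": 0.98}
# Hypothetical extra margin when the request involves tools or structured output.
STRUCTURED_MARGIN = 0.02


def required_similarity(tier: str, uses_tools_or_schema: bool) -> float:
    """Minimum similarity a cached answer needs before it may be reused."""
    threshold = BASE_THRESHOLDS[tier]
    if uses_tools_or_schema:
        threshold = min(threshold + STRUCTURED_MARGIN, 1.0)
    return threshold


def accept_cached(
    score: float,
    tier: str,
    uses_tools_or_schema: bool,
    cached_answer: str,
    policy_check: Callable[[str], bool],
) -> bool:
    """Accept a semantic hit only if it clears the threshold AND the current policy filter."""
    if score < required_similarity(tier, uses_tools_or_schema):
        return False
    # Re-run the same filter fresh generations must pass; policies may have
    # changed since this answer was cached.
    return policy_check(cached_answer)
```

With these placeholder values, a customer-facing request at similarity 0.96 is served from cache for plain chat but falls through to inference once a tool call or output schema raises the bar to about 0.97.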

Namespace caches by tenant, product line, and model configuration. A response produced under one safety profile must not satisfy a request running under another, even if the prompts look alike.
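A sketch of namespace derivation follows, assuming a hypothetical `ModelConfig` that captures the settings a cached answer depends on. Semantic lookups would be confined to entries whose namespace string matches exactly; because the model revision is part of the hashed configuration, an upgrade also lands in a fresh namespace.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ModelConfig:
    model_revision: str
    adapter_id: str
    safety_profile: str
    temperature: float


def cache_namespace(tenant: str, product_line: str, config: ModelConfig) -> str:
    """Build the partition that semantic lookups are confined to."""
    # Canonical JSON (sorted keys) so the same configuration always hashes identically.
    config_blob = json.dumps(asdict(config), sort_keys=True)
    config_hash = hashlib.sha256(config_blob.encode("utf-8")).hexdigest()[:16]
    return f"{tenant}/{product_line}/{config_hash}"


# Hypothetical configurations that differ only in safety profile.
strict = ModelConfig("rev-3", "support-lora", "strict", 0.0)
standard = ModelConfig("rev-3", "support-lora", "standard", 0.0)
print(cache_namespace("acme", "support", strict))
print(cache_namespace("acme", "support", standard))  # a different namespace
```

Hashing the configuration keeps keys short while still separating every combination of settings; the readable tenant and product-line prefix makes targeted purges straightforward.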

Invalidation and freshness

Caches of natural language answers rot when underlying facts change. Tie cache entries to source document versions when answers depend on RAG, or to explicit time-to-live values for volatile domains. Provide administrative APIs to purge namespaces after policy updates or model upgrades.
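The three invalidation paths (source-version checks, TTLs, and administrative purges) might look like this in minimal in-memory form. `InvalidatingStore` and its fields are hypothetical; in practice the entry ID would come from the vector index lookup and `current_versions` from your document store.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Entry:
    response: str
    created_at: float
    ttl_seconds: Optional[float] = None  # None means no time-based expiry
    # Source documents (id -> version) a RAG answer was grounded on.
    source_versions: dict[str, str] = field(default_factory=dict)


class InvalidatingStore:
    def __init__(self) -> None:
        # namespace -> entry_id -> Entry
        self.namespaces: dict[str, dict[str, Entry]] = {}

    def put(self, namespace: str, entry_id: str, entry: Entry) -> None:
        self.namespaces.setdefault(namespace, {})[entry_id] = entry

    def get(
        self,
        namespace: str,
        entry_id: str,
        current_versions: dict[str, str],
        now: Optional[float] = None,
    ) -> Optional[str]:
        """Return the cached response, evicting it if it has gone stale."""
        now = time.time() if now is None else now
        bucket = self.namespaces.get(namespace, {})
        entry = bucket.get(entry_id)
        if entry is None:
            return None
        expired = entry.ttl_seconds is not None and now - entry.created_at > entry.ttl_seconds
        # Any source document that changed since generation makes the answer suspect.
        outdated = any(
            current_versions.get(doc_id) != version
            for doc_id, version in entry.source_versions.items()
        )
        if expired or outdated:
            del bucket[entry_id]
            return None
        return entry.response

    def purge_namespace(self, namespace: str) -> int:
        """Administrative purge, e.g. after a policy update or model upgrade."""
        return len(self.namespaces.pop(namespace, {}))
```

Checking staleness at read time means a changed document invalidates its dependent answers lazily, without a separate sweep over the whole cache.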

When you roll out a new base model or adapter, treat the cache as suspect: either version cache keys with the model revision or flush relevant partitions. Silent mixing of generations produces subtle quality bugs that are hard to diagnose from aggregate metrics alone.
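If you take the flush route instead of versioned keys, a rollout hook can drop every partition written under an older revision. This sketch assumes a hypothetical layout in which partitions are keyed by `(tenant, model_revision)`.

```python
# Hypothetical layout: (tenant, model_revision) -> {entry_id: cached response}
Partitions = dict[tuple[str, str], dict[str, str]]


def flush_stale_revisions(partitions: Partitions, tenant: str, live_revision: str) -> int:
    """Drop every partition for `tenant` written under a revision other than `live_revision`.

    Returns the number of cached responses removed, which is worth logging
    alongside the rollout.
    """
    stale_keys = [
        key for key in partitions
        if key[0] == tenant and key[1] != live_revision
    ]
    removed = 0
    for key in stale_keys:
        removed += len(partitions.pop(key))
    return removed


parts = {
    ("acme", "r1"): {"a": "old answer", "b": "old answer"},
    ("acme", "r2"): {"c": "new answer"},
    ("globex", "r1"): {"d": "other tenant"},
}
print(flush_stale_revisions(parts, "acme", "r2"))  # 2
```

Other tenants still on the older revision keep their partitions, so a staged rollout does not cost them their cache.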

Privacy and data minimization

Stored prompts and answers can be as sensitive as primary application data. Encrypt data at rest, restrict access to cache administration endpoints, and define retention aligned with your broader logging policy. If prompts contain personal data, document whether embeddings constitute additional processing under your privacy framework and whether users can request deletion across cache entries.
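Deletion requests are only answerable if entries carry enough metadata to find them. The sketch below assumes a hypothetical `subject_id` tag recorded at insert time. Both helpers return the removed entry IDs so the matching embeddings can be deleted from the vector index as well, not just the response payloads.

```python
import time
from dataclasses import dataclass
from typing import Optional


@dataclass
class StoredEntry:
    entry_id: str
    subject_id: Optional[str]  # data subject the prompt concerns, if known
    created_at: float
    prompt: str
    response: str


def delete_subject(
    entries: list[StoredEntry], subject_id: str
) -> tuple[list[StoredEntry], list[str]]:
    """Honour a deletion request: drop every entry tagged with this subject."""
    kept = [e for e in entries if e.subject_id != subject_id]
    removed_ids = [e.entry_id for e in entries if e.subject_id == subject_id]
    return kept, removed_ids


def enforce_retention(
    entries: list[StoredEntry], retention_seconds: float, now: Optional[float] = None
) -> tuple[list[StoredEntry], list[str]]:
    """Drop entries older than the retention window applied to your other logs."""
    now = time.time() if now is None else now
    kept = [e for e in entries if now - e.created_at <= retention_seconds]
    removed_ids = [e.entry_id for e in entries if now - e.created_at > retention_seconds]
    return kept, removed_ids
```

Entries with no `subject_id` can still be removed by retention, but not by a targeted deletion request, which is one reason to decide on tagging before the cache goes live.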

For organizations that already run retrieval indexes, consider operational synergies: shared embedding infrastructure, consistent monitoring, and unified backup strategies. Avoid creating a second shadow data platform with weaker controls than your main vector stores.

When not to rely on semantic caching

Low-latency interactive chat with diverse phrasing may see limited hit rates early in adoption. Workloads with heavy tool use or multi-turn state often need cache keys that include session context, which reduces reuse. Treat semantic caching as one lever in a broader efficiency strategy alongside model routing, smaller models for simple intents, and batching for offline jobs—not a universal fix.
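To see why session context cuts reuse, consider a hypothetical partition key built from the conversation history and the set of enabled tools. Semantic matching would then run only within a partition, so the same follow-up question asked in two different conversations lands in two different partitions.

```python
import hashlib
import json


def session_partition(history: list[dict[str, str]], tool_names: list[str]) -> str:
    """Exact-match partition key for multi-turn or tool-using requests.

    Semantic lookup then runs only among entries sharing this key, so two
    similar questions asked after different conversations never share answers.
    """
    payload = json.dumps(
        # Sort tool names so enabling the same tools in a different order
        # does not needlessly fragment the cache.
        {"history": history, "tools": sorted(tool_names)},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()
```

Every distinct prior turn yields a distinct key, which is exactly why hit rates fall as conversations grow longer.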

Pilot the pattern on a narrow API surface first—internal documentation assistants or repeated analytics queries—where paraphrase overlap is high and the cost of a wrong cache hit is bounded. Instrument hit rate, latency savings, and manual review outcomes before expanding to customer-facing flows.

Measuring success without vanity metrics

Useful dashboards track GPU seconds avoided, cache hit rate segmented by tenant, and incidents tied to stale answers. Qualitative review remains important: schedule periodic sampling of cache hits to confirm that near-duplicate prompts truly deserved the same response. That sampling closes the loop between engineering efficiency and product trust.
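A minimal metrics collector along these lines is sketched below. It assumes the gateway can estimate the GPU seconds a fresh generation would have cost (for example from a rolling average over misses); that estimate is an assumption, not something the cache measures directly. It keeps per-tenant counters and a uniform reservoir sample of hits (Algorithm R) for periodic human review.

```python
import random
from collections import defaultdict
from typing import Optional


class CacheMetrics:
    """Per-tenant hit rate, estimated GPU seconds avoided, and a review sample."""

    def __init__(self, sample_size: int, seed: Optional[int] = None) -> None:
        self.requests = defaultdict(int)
        self.hits = defaultdict(int)
        self.gpu_seconds_avoided = defaultdict(float)
        self.sample_size = sample_size
        self.samples = []  # (incoming prompt, prompt whose answer was reused)
        self._hits_seen = 0
        self._rng = random.Random(seed)

    def record(self, tenant: str, hit: bool, est_gpu_seconds: float = 0.0,
               prompt: str = "", matched_prompt: str = "") -> None:
        self.requests[tenant] += 1
        if not hit:
            return
        self.hits[tenant] += 1
        self.gpu_seconds_avoided[tenant] += est_gpu_seconds
        # Reservoir sampling (Algorithm R): every hit so far has an equal chance
        # of being in the review sample, without storing all hits.
        self._hits_seen += 1
        pair = (prompt, matched_prompt)
        if len(self.samples) < self.sample_size:
            self.samples.append(pair)
        else:
            j = self._rng.randrange(self._hits_seen)
            if j < self.sample_size:
                self.samples[j] = pair

    def hit_rate(self, tenant: str) -> float:
        total = self.requests.get(tenant, 0)
        return self.hits.get(tenant, 0) / total if total else 0.0
```

Reviewers then compare each sampled pair of prompts to judge whether the reused answer was deserved; the sample stays bounded no matter how much traffic the cache serves.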

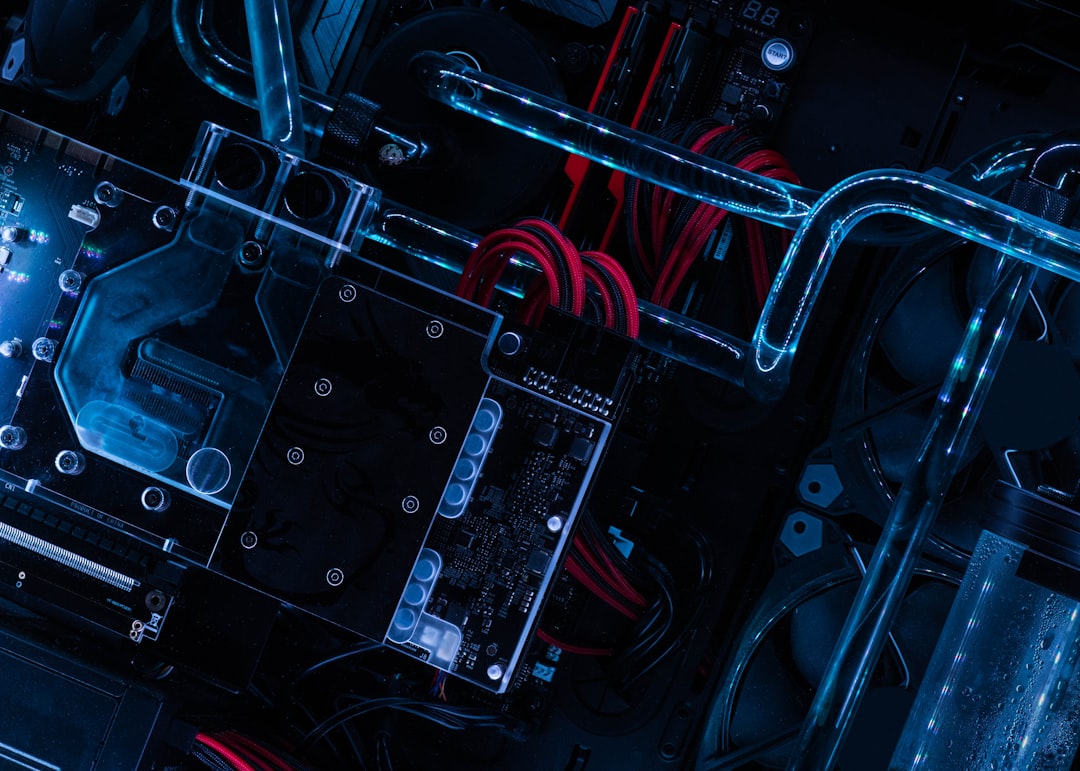

Featured image by Cristiano Firmani on Unsplash.