
LoRA Adapter Promotion Pipelines for On-Premises LLMs: Staging, Compatibility, and Rollback

A practical lifecycle for low-rank adapters on private infrastructure: how to version, validate, and promote LoRA weights without treating them as informal sidecar files.

Why adapters need their own release train

Low-rank adaptation (LoRA) and related parameter-efficient fine-tuning methods let teams specialize a base model for a domain without copying full weights. On paper, that is ideal for on-premises operations: smaller artifacts, faster transfers, and clearer separation between frozen foundation weights and task-specific deltas. In practice, adapters become risky when they are copied onto file shares without provenance, mixed across incompatible base checkpoints, or promoted without the same rigor applied to application code.

A promotion pipeline answers three questions for every adapter: which base model revision it targets, what evidence supports shipping it, and how to roll back if behavior regresses. Without those answers, operations teams cannot reason about incidents, and security reviewers cannot assess data lineage for training material.

Version coupling: adapters are never standalone

An adapter is only valid relative to a specific base model identifier, tokenizer revision, and often a particular quantization profile. Your registry should store a manifest that binds the adapter artifact to that base, similar to how container images reference digests. Serving stacks such as vLLM, TGI, or custom inference services expose different APIs for loading adapters; the manifest should be the single source of truth consumed by those services.
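As a minimal sketch of such a manifest, the snippet below binds an adapter's content hash to the base model, tokenizer, and quantization profile it was trained against. The field names and the `build_manifest` and `verify_artifact` helpers are illustrative assumptions, not the schema of any particular registry or serving stack.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AdapterManifest:
    """Hypothetical manifest schema; adjust the fields to your registry."""
    adapter_id: str
    adapter_sha256: str
    base_model_id: str
    base_model_revision: str
    tokenizer_revision: str
    quantization_profile: str

    def to_json(self) -> str:
        # Sorted keys keep the serialized manifest stable across runs.
        return json.dumps(asdict(self), sort_keys=True)


def sha256_bytes(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def build_manifest(adapter_id: str, weights: bytes, base_model_id: str,
                   base_model_revision: str, tokenizer_revision: str,
                   quantization_profile: str) -> AdapterManifest:
    """Bind the adapter bytes to the base they were trained against."""
    return AdapterManifest(
        adapter_id=adapter_id,
        adapter_sha256=sha256_bytes(weights),
        base_model_id=base_model_id,
        base_model_revision=base_model_revision,
        tokenizer_revision=tokenizer_revision,
        quantization_profile=quantization_profile,
    )


def verify_artifact(manifest: AdapterManifest, weights: bytes) -> bool:
    """True only if the bytes on disk match the digest in the manifest."""
    return sha256_bytes(weights) == manifest.adapter_sha256
```

A loader that calls `verify_artifact` before attaching an adapter gets the same guarantee a container runtime gets from pulling by digest: the bytes it serves are the bytes that were reviewed.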

Teams frequently underestimate tokenizer drift. If the base model tokenizer or chat template changes between releases, an adapter may load yet produce degraded or unsafe outputs. Promotion gates should include a check that tokenizer and template metadata match the environment where inference runs.
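One way to express that gate, sketched under the assumption that both the manifest and the serving environment expose the same metadata keys. The key names (`base_model_revision`, `tokenizer_revision`, `chat_template_sha256`) are hypothetical, and how you read them from a running inference service depends on your stack.

```python
import hashlib
from typing import Dict, List

# Hypothetical metadata keys that must agree between manifest and runtime.
COMPATIBILITY_KEYS = (
    "base_model_revision",
    "tokenizer_revision",
    "chat_template_sha256",
)


def fingerprint(text: str) -> str:
    """Hash a chat template so it can be compared without storing it twice."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def compatibility_errors(manifest: Dict[str, str],
                         runtime: Dict[str, str]) -> List[str]:
    """Return human-readable mismatches; an empty list means the gate passes."""
    errors = []
    for key in COMPATIBILITY_KEYS:
        expected = manifest.get(key)
        actual = runtime.get(key)
        if expected is None:
            errors.append(f"manifest is missing {key}")
        elif expected != actual:
            errors.append(
                f"{key} mismatch: manifest={expected!r} runtime={actual!r}"
            )
    return errors
```

Treating a missing key as a failure is deliberate: an adapter whose manifest never recorded a template hash cannot be shown to be compatible, so it should not pass by default.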

Staging environments that mirror production pressure

Validation belongs in an environment that uses the same batching, concurrency, and GPU memory pressure as production. Smoke tests on an idle workstation are insufficient. Instead, run representative workloads: typical prompt lengths for your domain, bursty traffic patterns, and multi-tenant scheduling if several teams share the cluster.

Automated checks can include golden prompt suites maintained by product owners, schema validation for structured outputs, and comparative runs against the previous adapter revision. The goal is not perfection on every subjective task; it is detecting unexpected regressions on agreed reference cases before users encounter them.
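A comparative run can be as small as the sketch below. It assumes each golden case carries a prompt and a pass/fail check, and that `candidate` and `previous` are callables that send a prompt to the respective adapter revision and return its text output. Those callables, the case layout, and the `is_valid_json_object` helper are placeholders for whatever client and schema validation your team already uses.

```python
import json
from typing import Callable, Dict, List


def is_valid_json_object(output: str, required_keys: List[str]) -> bool:
    """Minimal structured-output check: parses as JSON and has the keys."""
    try:
        parsed = json.loads(output)
    except json.JSONDecodeError:
        return False
    return isinstance(parsed, dict) and all(k in parsed for k in required_keys)


def find_regressions(golden_cases: List[Dict],
                     candidate: Callable[[str], str],
                     previous: Callable[[str], str]) -> List[str]:
    """Return ids of cases the previous revision passed but the candidate fails."""
    regressions = []
    for case in golden_cases:
        check = case["check"]
        passed_before = check(previous(case["prompt"]))
        passes_now = check(candidate(case["prompt"]))
        if passed_before and not passes_now:
            regressions.append(case["id"])
    return regressions
```

Reporting only cases that flipped from pass to fail keeps the gate focused on regressions; cases that both revisions fail are a backlog item rather than a reason to block promotion.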

Promotion, activation, and blue-green patterns

Treat promotion as a controlled traffic shift. A common pattern is to deploy the new adapter revision alongside the previous one, route a small percentage of traffic or a dedicated canary tenant, and expand after observing stability signals. For purely internal APIs, activation can be as simple as updating a configuration pointer in your model registry once checks pass.
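The routing decision itself can be sketched in a few lines. This example assumes traffic is keyed by a tenant identifier and that a stable hash of that identifier decides which revision serves the request; `choose_revision` and its parameters are illustrative names, not part of any serving framework.

```python
import hashlib
from typing import FrozenSet


def canary_bucket(tenant_id: str) -> int:
    """Map a tenant to a stable bucket in [0, 100)."""
    digest = hashlib.sha256(tenant_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % 100


def choose_revision(tenant_id: str, stable: str, candidate: str,
                    canary_percent: int,
                    canary_tenants: FrozenSet[str] = frozenset()) -> str:
    """Pick the adapter revision that should serve this tenant's request."""
    # Dedicated canary tenants always see the candidate revision.
    if tenant_id in canary_tenants:
        return candidate
    # Everyone else is assigned by bucket, so a tenant never flips
    # between revisions while the percentage stays the same.
    if canary_bucket(tenant_id) < canary_percent:
        return candidate
    return stable
```

Hashing the tenant rather than sampling per request keeps each tenant on one revision, which makes a regression report attributable to a specific adapter. Raising `canary_percent` only adds tenants to the candidate; it never moves one back.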

Rollback must be one configuration change, not a scramble to find older files. Keep at least one prior adapter revision addressable by ID, with retention policies aligned to your audit requirements. If storage pressure forces deletion, archive manifests and hashes to cold storage first.
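To make the "one configuration change" idea concrete, here is a small in-memory sketch of an activation pointer that remembers prior revisions. The `AdapterPointer` class is hypothetical; a real registry would persist this state and record who changed it.

```python
from typing import List


class AdapterPointer:
    """Tracks which adapter revision is active and what came before it."""

    def __init__(self, initial_revision: str) -> None:
        self._history: List[str] = [initial_revision]

    @property
    def active(self) -> str:
        return self._history[-1]

    def promote(self, revision: str) -> None:
        """Activate a new revision while keeping the old one addressable."""
        if revision == self.active:
            raise ValueError(f"{revision} is already active")
        self._history.append(revision)

    def rollback(self) -> str:
        """Return to the previous revision in a single step."""
        if len(self._history) < 2:
            raise RuntimeError("no prior revision to roll back to")
        self._history.pop()
        return self.active
```

For example, after `pointer.promote("support-v4")`, a call to `pointer.rollback()` returns the previously active ID without anyone searching for files. The revision IDs only help if the artifacts behind them are still retained, which is why the retention policy matters.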

Governance, access, and separation of duties

Adapter weights change model behavior as directly as a code deployment does, so treat them as executable artifacts. Restrict write access to build pipelines and service accounts; developers may propose changes through pull requests that update training recipes and evaluation notebooks, not by uploading weights directly to production paths. Separate roles for training job submission, artifact signing or approval, and production activation reduce insider risk and operational mistakes.
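A promotion gate can enforce that separation mechanically. The sketch below assumes each promotion record names who submitted the training job, who approved the artifact, and who activated it; the record keys and the `separation_violations` helper are hypothetical and would map onto whatever identity system you already run.

```python
from typing import Dict, List

# Hypothetical record keys, one per role described above.
REQUIRED_ROLES = ("submitted_by", "approved_by", "activated_by")


def separation_violations(record: Dict[str, str]) -> List[str]:
    """List separation-of-duties problems; an empty list means none found."""
    violations = []
    for role in REQUIRED_ROLES:
        if not record.get(role):
            violations.append(f"missing {role}")
    present = [(role, record[role]) for role in REQUIRED_ROLES if record.get(role)]
    # Compare every pair of roles once and flag any shared actor.
    for i, (role_a, actor_a) in enumerate(present):
        for role_b, actor_b in present[i + 1:]:
            if actor_a == actor_b:
                violations.append(f"{actor_a} holds both {role_a} and {role_b}")
    return violations
```

Whether a pipeline service account may hold two of these roles is a policy decision; this check only makes the overlap visible.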

Document training data scope at a high level: which corpora, time window, and filtering rules applied. That documentation supports privacy reviews and helps downstream teams understand limitations without exposing raw data in the manifest.

When full fine-tunes still enter the picture

Adapters excel when specialization is modular and reversible. Some programs eventually need merged full weights, either for latency or because a single merged artifact is simpler to deploy and serve. Plan that transition explicitly: merging introduces a new base artifact that should pass the same lifecycle gates as any foundation model refresh, not an informal one-off export.

Document the business trigger for that shift (serving cost, operational complexity, or latency budgets) so the decision stays reviewable rather than accidental. Platform teams can then provision artifact storage and CI templates for merged models without scrambling when product teams outgrow adapter-only workflows.

Operational handover between data science and platform teams

Clear handoff artifacts reduce pager noise. Training notebooks should export a reproducible recipe: random seeds, dataset identifiers, hyperparameters, and evaluation summaries. Platform engineers need a concise runbook for loading adapters in production, health checks that fail fast when manifests mismatch, and dashboards that surface adapter-specific error rates distinct from base model errors.
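The recipe export can be a small, boring file. This sketch assumes the four items named above are enough to identify a training run; the `TrainingRecipe` fields and the fingerprint helper are illustrative, and real recipes usually also need code and environment revisions.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Dict, List


@dataclass(frozen=True)
class TrainingRecipe:
    """Hypothetical recipe layout exported at the end of a training run."""
    seed: int
    dataset_ids: List[str]
    hyperparameters: Dict[str, float]
    evaluation_summary: Dict[str, float]


def export_recipe(recipe: TrainingRecipe) -> str:
    """Serialize the recipe as readable, key-sorted JSON for the handover."""
    return json.dumps(asdict(recipe), sort_keys=True, indent=2)


def recipe_fingerprint(recipe: TrainingRecipe) -> str:
    """Stable hash of the recipe, suitable for referencing from a manifest."""
    canonical = json.dumps(asdict(recipe), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Storing the fingerprint next to the adapter lets a platform engineer confirm which recipe produced a given artifact without opening the notebook.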

When incidents occur, responders should be able to answer whether the problem is adapter-specific, base-model-specific, or shared infrastructure, without downloading weights to a laptop. That discipline is what makes LoRA economical at scale instead of turning every hotfix into an emergency merge.
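A first-pass triage can come from request logs alone. The sketch below is a crude heuristic, not a diagnostic method: it assumes each logged request records the base model, the adapter, and whether it succeeded, and it uses an arbitrary 5% error-rate threshold. The function names and labels are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Request = Tuple[str, str, bool]  # (base_id, adapter_id, succeeded)


def error_rates(requests: Iterable[Request]) -> Dict[Tuple[str, str], float]:
    """Error rate per (base, adapter) pair."""
    totals: Dict[Tuple[str, str], int] = defaultdict(int)
    errors: Dict[Tuple[str, str], int] = defaultdict(int)
    for base_id, adapter_id, ok in requests:
        key = (base_id, adapter_id)
        totals[key] += 1
        if not ok:
            errors[key] += 1
    return {key: errors[key] / totals[key] for key in totals}


def triage(requests: Iterable[Request], threshold: float = 0.05) -> str:
    """Guess where a problem lives from which pairs exceed the threshold."""
    rates = error_rates(requests)
    if not rates:
        return "no-data"
    unhealthy = {key for key, rate in rates.items() if rate > threshold}
    if not unhealthy:
        return "healthy"
    by_base = defaultdict(set)
    for key in rates:
        by_base[key[0]].add(key)
    # A base counts as broken when every adapter served on it is unhealthy.
    broken_bases = [b for b, keys in by_base.items() if keys <= unhealthy]
    if len(broken_bases) == len(by_base):
        # With one base, logs cannot separate base from infrastructure.
        if len(by_base) > 1:
            return "shared-infrastructure"
        return "base-model-or-infrastructure"
    if broken_bases:
        return "base-model-specific"
    return "adapter-specific"
```

The labels are a starting point for responders rather than a verdict. A base that serves only one adapter, for instance, will be reported as base-model-specific even when the adapter is at fault.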

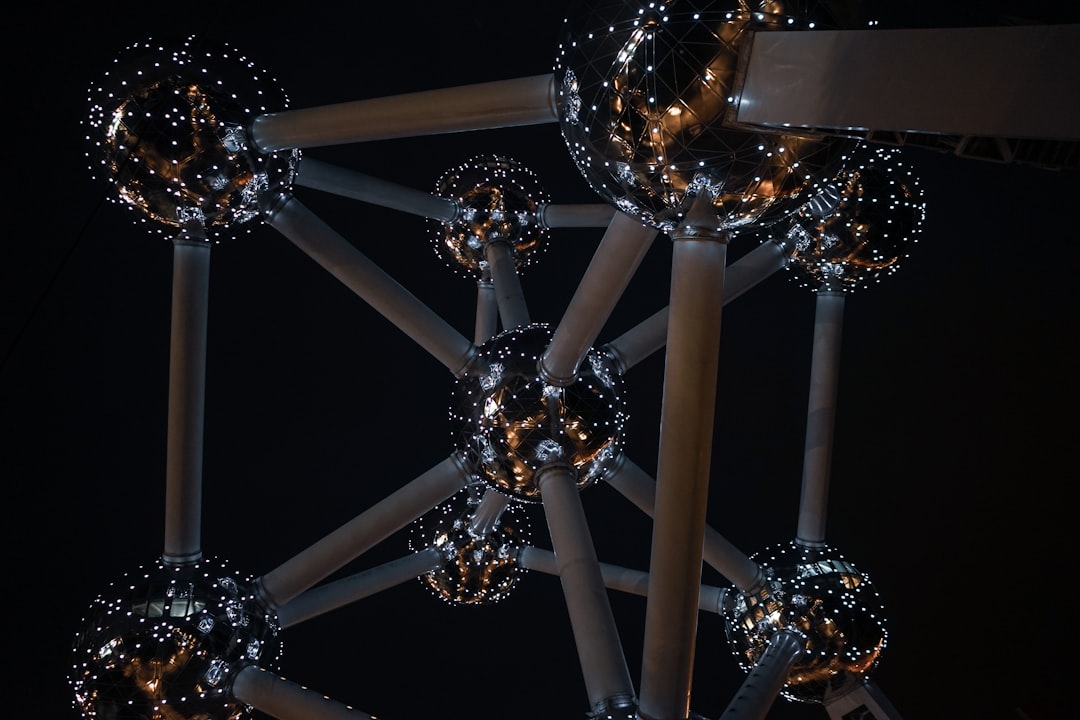

Featured image by henri buenen on Unsplash.